AI Asset Maturity

Translated from French with the help of AI – please excuse any linguistic nuances.

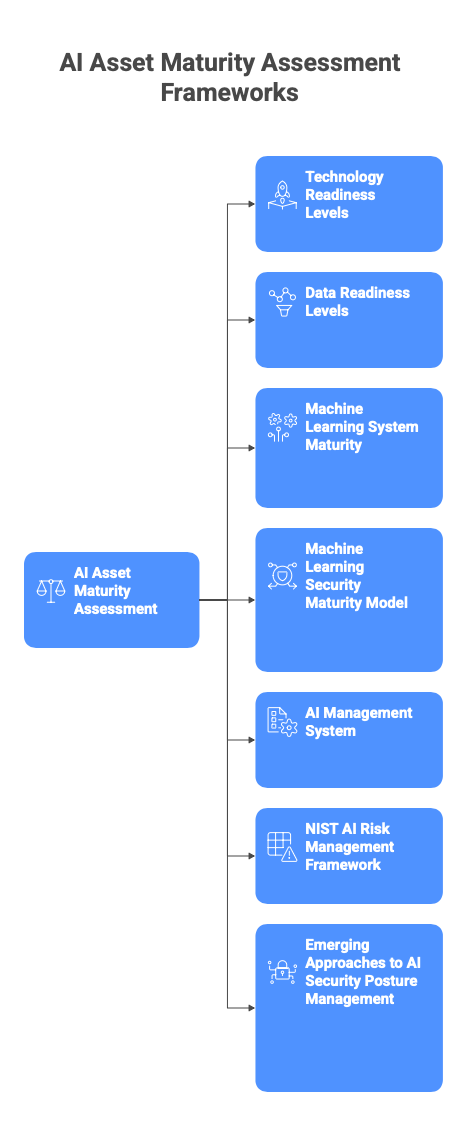

This document provides an in-depth analysis of the main frameworks for assessing the maturity of AI assets. It includes concrete examples for driving the maturity of data, models, prompts, pipelines, agents, and AI systems. The objective is not to introduce a new framework, but to build on the many existing references. This list is not exhaustive:

- Technology Readiness Levels (TRL)

- Data Readiness Levels (arxiv-Neil D. Lawrence-2017)

- Machine Learning system maturity (MLTRL / TRL4ML Arxiv-Alexander Lavin et al.-2022)

- Machine Learning Security Maturity Model (MLSMM) (Arxiv-Felix Viktor Jedrzejewski et al.-2023)

- AI Management System (ISO/IEC 42001 ISO)

- NIST AI Risk Management Framework (AI RMF)(NIST)

- Emerging approaches to AI Security Posture Management (AISPM)

Yes, I’m adding three more levels… At this point, our collection of “RLs” is seriously starting to look like a maturity framework Pokedex… let’s just say we’re adding Pokémon evolutions.

Although this document draws on established frameworks (Technology Readiness Levels – TRL, Data Readiness Levels – DRL, Machine Learning Technology Readiness Levels – MLTRL, etc.), the three dimensions below are not simply “new” frameworks invented here but rather adaptations of existing ones:

- SecRL (Security Readiness Levels): the European MultiRATE project explicitly describes a 9-level Security Readiness Level (Sec RL) scale, aligned with other readiness scales (TRL, IRL, CRL…) to assess the maturity of the security of a technology or asset. In parallel, work on “AI Security Maturity Models” clearly identifies the “AI security” dimension as an independent maturity axis (security controls, monitoring, adversarial robustness…). (m4d.iti.gr) In this document, SecRL is proposed as an adaptation at the level of AI assets.

- GovRL (Governance Readiness Levels): although the exact term “GovRL” is not widely used in the literature (it is probably coined here), the underlying idea is strongly supported by several studies: for example, the AI Governance Maturity Model (IEEE-USA) uses a maturity scorecard for AI governance, based on the NIST AI Risk Management Framework (AI RMF) (IEEE-USA). Commercial and market models also describe “AI Governance Maturity Models” covering levels of adoption, documentation, oversight, and AI governance (zendata.dev). Here, GovRL is proposed as an asset-centric version of this AI governance: we measure not only the “organizational” AI governance, but also the maturity of governance for each individual AI asset.

- OpsRL (Operations Readiness Levels): here too, the term “OpsRL” is not commonly used, but the notion of “Operational Readiness” (the preparation of an organization or system to move into production) is well documented (Wikipedia). In our framework, OpsRL refers to the operational maturity of an AI asset (pipeline, model, infrastructure): from an “experimental” prototype to a fully operated, monitored, automated, and scalable service.

SecRL, GovRL, and OpsRL thus fit into a continuum of readiness/maturity, complementing the technological (TRL/MLTRL), data (DRL), and operational (MLOps/ops-readiness) axes. Their addition strengthens coverage of the spectrum: not only “Is the technology ready?”, but also “Is this technology secure? And well governed?” …

Many “recent” AI assets (for example, generative models, autonomous agents, or multi-domain AI components) rely on technologies with high generality. Yet according to AI Watch, “the more specialized an AI technology is, the easier it is to climb the TRL ladder… but as soon as it becomes too general, the higher levels remain as unattainable as a level-99 final boss.” (AI Watch) Even if these assets are already exposed to end users, it is therefore reasonable to estimate that they sit in the TRL 3 to 7 zone rather than at TRL 8 or 9, because of their complexity, the need for multi-task robustness, and operating conditions that are still insufficiently proven.

Why measure the maturity of AI assets?

Several dynamics now structure every AI project: the race for use cases, the real maturity of assets, and the human dimension of governance. They explain why some projects take off … and why so many others remain stuck on the runway.

Projects move faster than the foundations

Organizations are already combining scoring models, recommendation engines, operational optimization, generative models, chatbots, RAG, agents, and exploratory projects within business teams (often outside official channels).

Yet many projects remain stuck at the POC stage for lack of ready data, MLOps industrialization, or sufficient risk framing. “AI” incidents (data leaks, inappropriate responses, bias) often share the same root causes: poorly mastered data, unmonitored models, diffuse responsibilities (Hyperproof). Finally, regulatory (AI Act, sector-specific requirements) and normative (ISO 42001, NIST AI RMF) frameworks demand increased traceability and governance of AI systems. (ISO)

Even though TRLs give a view of system maturity, they do not indicate how well the data are mastered, how the models were designed, tested, and documented, what the AI security level is, who owns the risk on a daily basis, or the state of operations (pipeline, infrastructure, monitoring).

Value for research, tech, business, and marketing

DRL and MLTRL were designed to structure the transformation of research results into usable systems (arxiv-Neil D. Lawrence-2017). A maturity grid for AI assets makes it possible to prioritize work on data (raising the DRL) as much as on models, to avoid producing “unindustrializable by design” demonstrators, and to identify strategic AI assets (proprietary datasets, robust models) that deserve reinforced investment.

For Data / ML / Engineering / Ops teams, the DRL, MRL (model maturity), and OpsRL frameworks help prioritize industrialization initiatives (feature store, pipelines, monitoring, observability) (digital.nemko.com). SecRL provides a language for integrating AI security requirements (adversarial testing, security posture, AISPM) into technical roadmaps, and GovRL aligns technical efforts with the compliance trajectory (ISO 42001, NIST AI RMF, AI Act). (ISO)

For an Executive Committee or business leadership, TRL answers the question: “Is this solution ready to be tested, deployed, or scaled?” AI asset maturity answers: “What does it rest on? What is the foundation? Can it be reused elsewhere? Is it mastered?”

And finally, the ability to demonstrate data provenance, prove the existence of controls, and provide an AI-BOM (still very – VERY – rarely used) / AI asset fact sheets becomes a value proposition in B2B/B2G relationships and a credibility factor in the field of “responsible AI.”

Human and corporate policy dimension

AI asset maturity is not just a matter of reference frameworks; it is a question of power (who decides?), responsibility (who signs?), and the ability to say no (or “not yet”).

“AI asset maturity isn’t just about frameworks: it’s about who decides, who signs… and who dares to say no.” In short, until we clarify that, we’re not managing AI: we’re just passing the hot potato around, and in the end everyone swears it wasn’t their hand that got burned.

It is illusory to want to centralize everything in a single role (“heroic Model Owner”). Responsibility must be shared, explicit, and connected to existing decision-making bodies (risk committees, architecture, business).

Overview of existing frameworks (watch)

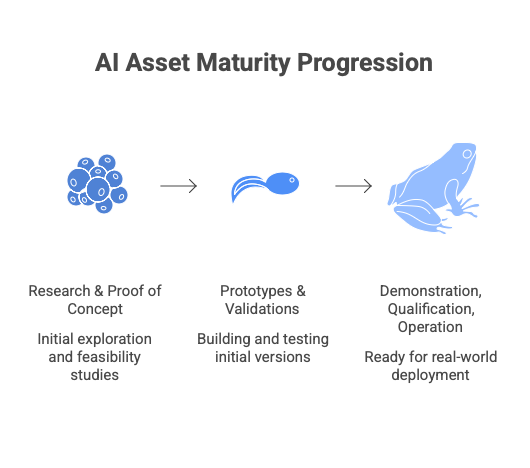

TRL – Technology Readiness Levels

The standard for technological maturity of systems (1–9), used in aerospace, defense, European programs, etc. It can be broken into 3 main parts:

- research and proof of concept (TRL 1 to 3);

- prototypes and validations (TRL 4 to 6);

- demonstration, qualification, operation (TRL 7 to 9).

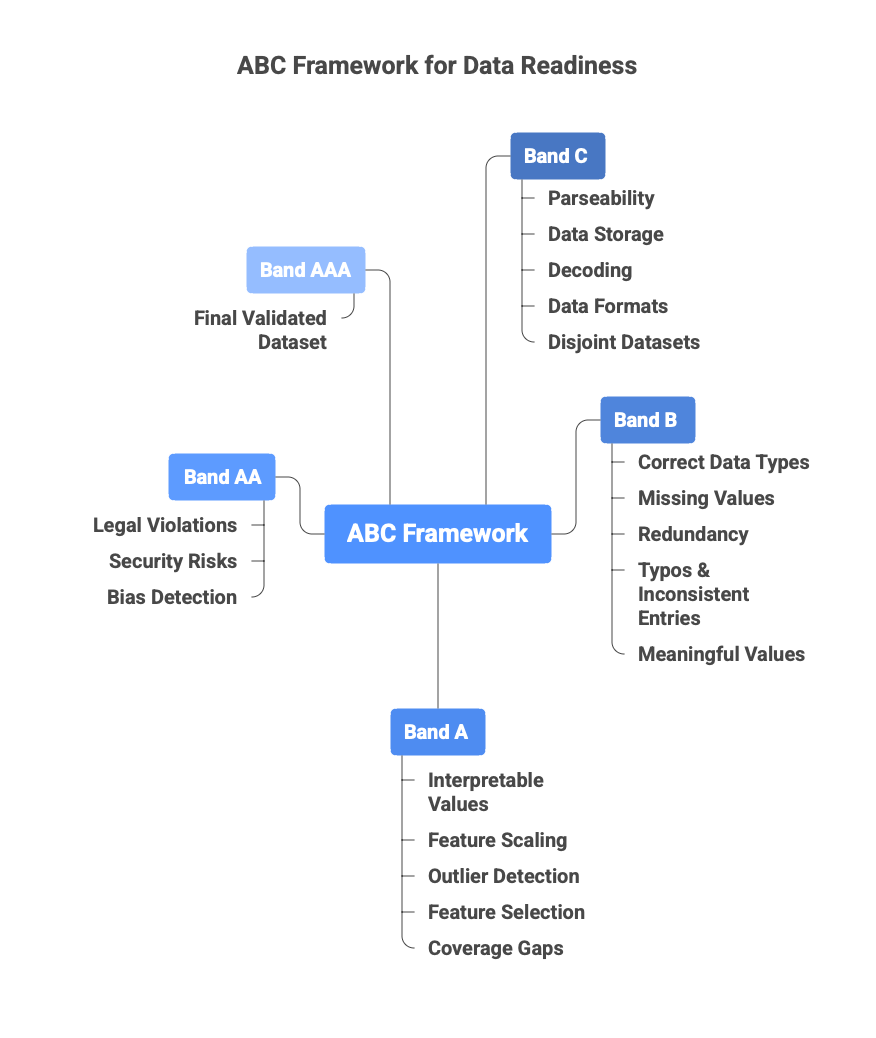

DRL – Data Readiness Levels

Proposed by Neil Lawrence (2017), DRL distinguishes three “bands” (C/B/A) to describe data accessibility (from simple hypothesis to loaded data), data quality/validity (cleaning, exploration, representativeness), and data utility for a specific task (format, context, compliance). Each band can be subdivided (e.g., C4→C1, B3→B1, A3→A1) for greater granularity. (arxiv-Neil D. Lawrence-2017)

This grid creates a common language: “where are my data?”, assesses remaining efforts before modeling or exploitation, and better plans resources (data engineering, cleaning, compliance, etc.). Although the framework is generic, community work has begun to propose more operational versions (checklists, criteria per level) in certain sectors or contexts (e.g., NLP data-readiness documentation); however, a universal “per-sector” validated reference remains to be consolidated, so use these adaptations with caution.

Recent work refines these bands into operational criteria, by sector.(pure.tue.nl)

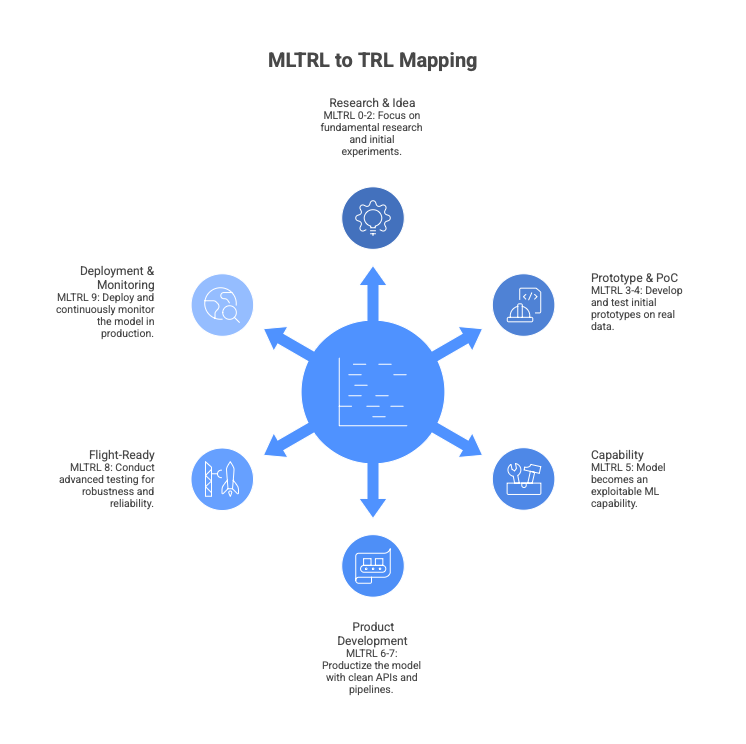

MLTRL – Machine Learning Technology Readiness Levels

MLTRL transposes TRLs to the lifecycle of ML systems.(Arxiv-Alexander Lavin et al.-2022)

As a reading aid, MLTRL levels can be mapped roughly onto TRL levels (MLTRL adds a level 0 that the 1–9 TRL scale does not have):

- TRL 1–2 → MLTRL 0–2: research, from idea to principles to first experiments, often on simulated or partial data.

- TRL 3–4 → MLTRL 3–4: prototype and proof of concept, with prototype code, first integrations, and a PoC on real data.

- TRL 5 → MLTRL 5: capability; the model becomes an exploitable ML capability, handed over to engineering.

- TRL 6–7 → MLTRL 6–7: product development and integration, covering productization, clean APIs, real pipelines, tests, CI/CD, and quality.

- TRL 8 → MLTRL 8: flight-ready, with advanced testing (A/B, shadow, canary, data drift, robustness).

- TRL 9 → MLTRL 9: deployment and continuous monitoring, with ML observability, data drift detection, and an improvement loop.

MLSMM – Machine Learning Security Maturity Model

The Machine Learning Security Maturity Model (MLSMM) is a maturity model proposed to evaluate and improve security practices in the development of machine-learning-based systems. It is a “light” and domain-agnostic framework designed to fill the gap in ML-specific security models compared with traditional software development approaches.

It is a conceptual prototype, not yet empirically validated at scale.

ISO/IEC 42001 – AI Management System (AIMS)

This is a certifiable international standard dedicated to responsible AI management. It defines the requirements for an artificial intelligence management system. Any organization (company, administration, association) that develops, supplies, or uses AI systems and adopts the standard must implement a comprehensive framework covering: mapping of AI risks throughout the lifecycle, impact assessment, governance, traceability of data and models, measurement of ethical and technical performance, incident management, and continuous improvement. Designed to be compatible with the NIST AI RMF and the EU AI Act, it is rapidly becoming a reference for “responsible AI” certifications and is already required in certain public tenders and B2G/B2B contracts. (ISO)

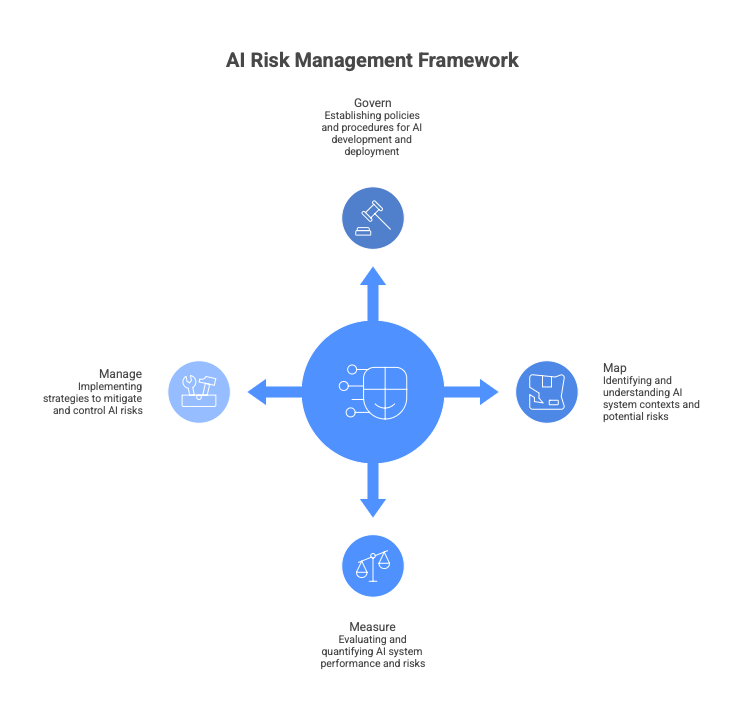

NIST AI RMF

This framework is designed as a voluntary, non-binding guide (unlike the AI Act). It provides a structured and pragmatic methodology for identifying, assessing, prioritizing, and managing risks associated with AI systems throughout their lifecycle.

It is organized around four core functions: Govern (governance), Map (map context and risks), Measure (measure and evaluate), and Manage (manage and mitigate). It places particular emphasis on emerging risks such as bias, privacy loss, adversarial security, hallucinations, and societal impacts. It is widely adopted by American and international companies.

It serves as a compliance foundation for many regulations and is explicitly recognized as compatible with ISO/IEC 42001 and the EU AI Act. In practice, it has become the “common language” of AI risk management in the English-speaking world and beyond. (NIST)

DataOps / MLOps / DevOps / SecOps

Recent analyses converge: many models remain at the prototype stage for lack of robust pipelines. MLOps involves non-negligible costs (in-house platform or assembly of building blocks), amplified by GPU costs, the need to manage multiple model generations, and auditability requirements.

Readiness Level frameworks are a bit like a family dinner in the countryside: there’s more livestock than guests and the smell stings your nose at first, but you get used to it.

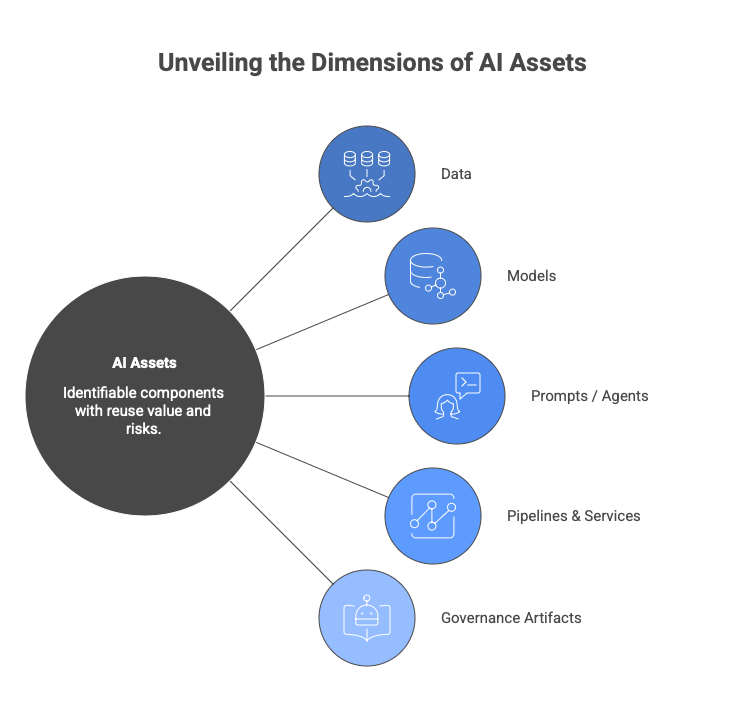

Focus on AI assets and the reality of an inventory

An AI asset can be defined as any identifiable component that has potential reuse value and carries specific risks.

Typically:

- data: sources, datasets, features;

- models: internal, open source, third-party, fine-tuned;

- prompts / agents: system prompts, workflows, agents;

- pipelines & services: training, inference, orchestration;

- governance artifacts: AI-BOM, model fact sheets, AI risk registers.

Implementing an exhaustive inventory is rarely realistic in the short term due to dispersion (notebooks, Git repos, SaaS tools, local scripts), diversity of practices (shadow AI across different teams), and the continuous updating effort that is difficult to fund over time. Even for “classic” software, maintaining a complete SBOM remains a challenge.

These difficulties do not mean we should do nothing. We can imagine a targeted and progressive inventory: first focus on high-stakes systems (AI with customer/citizen impact, high financial impact, high risk under the AI Act), then extend in waves. Define a data schema for each entry (asset type, owner, dependency links, exposure, synthetic maturity) and, ultimately, try to automate collection.
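The data schema mentioned above can be sketched as a small record type. This is only an illustration: the field names, the exposure labels, and the `high_stakes_first` helper are assumptions made for the example, not part of any existing standard or tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AIAssetRecord:
    """One inventory entry; all field names are illustrative assumptions."""
    asset_id: str
    asset_type: str   # e.g. "dataset", "model", "prompt", "pipeline"
    owner: str
    exposure: str     # assumed labels: "internal" or "customer-facing"
    depends_on: List[str] = field(default_factory=list)     # ids of upstream assets
    maturity: Dict[str, int] = field(default_factory=dict)  # synthetic maturity per dimension


def high_stakes_first(assets: List[AIAssetRecord]) -> List[AIAssetRecord]:
    """Put customer-facing assets first so they land in the first inventory wave."""
    # sorted() is stable: False (customer-facing) sorts before True (everything else).
    return sorted(assets, key=lambda a: a.exposure != "customer-facing")


inventory = [
    AIAssetRecord("ds-claims", "dataset", "data-team", "internal"),
    AIAssetRecord("chatbot-v2", "model", "cx-team", "customer-facing",
                  depends_on=["ds-claims"]),
]
first_wave = high_stakes_first(inventory)
```

Keeping `depends_on` as a list of asset ids is one simple way to record dependency links; a real inventory would probably need richer criteria than a single exposure label to define "high stakes".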

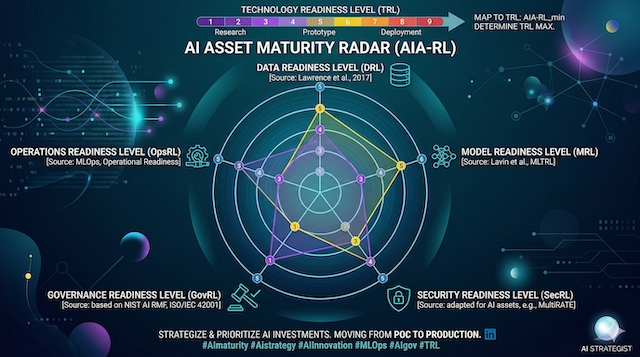

AI asset maturity and articulation with system TRL

To build this AI Asset RL (AIA-RL), we associate five levels (where 1 = totally informal and 5 = industrial, certifiable, reusable at group scale) with the existing frameworks. The overall AIA-RL of an asset or system is always AIA-RL_min, the lowest score among the five dimensions (DRL, MRL, SecRL, GovRL, OpsRL).
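The "lowest score wins" rule is easy to state in code. The following is a minimal sketch; the function names and the dictionary layout are assumptions chosen for illustration.

```python
from typing import Dict, List

DIMENSIONS = ("DRL", "MRL", "SecRL", "GovRL", "OpsRL")


def aia_rl_min(scores: Dict[str, int]) -> int:
    """Return the overall AIA-RL: the lowest of the five dimension scores."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for d in DIMENSIONS:
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"{d} must be between 1 and 5, got {scores[d]}")
    return min(scores[d] for d in DIMENSIONS)


def weakest_dimensions(scores: Dict[str, int]) -> List[str]:
    """List the dimensions that cap the overall score."""
    floor = aia_rl_min(scores)
    return [d for d in DIMENSIONS if scores[d] == floor]


example = {"DRL": 4, "MRL": 4, "SecRL": 2, "GovRL": 3, "OpsRL": 4}
print(aia_rl_min(example))          # 2
print(weakest_dimensions(example))  # ['SecRL']
```

Taking the minimum rather than an average means a strong model cannot compensate for weak security: the example asset is capped at 2 by SecRL alone.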

How each major existing framework is condensed into each AIA-RL dimension

DRL: data maturity (source: Data Readiness Levels – Neil Lawrence 2017 + operational adaptations)

- AIA-RL 1: band C (hypothetical or inaccessible), data exists “in someone’s head” or in a personal unshared file.

- AIA-RL 2: band C / beginning of B (accessible but raw data), contractual access validated, source known, owner designated, but no pipeline, no systematic cleaning.

- AIA-RL 3: band B (usable data), preparation pipeline versioned, some quality indicators measured and historized, schema documented.

- AIA-RL 4: band A (data ready for industrialization), feature store or data lakehouse exists, automated quality and compliance tests, cross-project reuse possible.

- AIA-RL 5: advanced band A + certification, data are auditable, GDPR and AI Act compliance is attested, and datasets are versioned like code and immediately reusable by any team in the group.

MRL: model maturity (Arxiv-Alexander Lavin et al.-2022, levels 0 to 9 condensed)

- AIA-RL 1: MLTRL 0-1, idea or single non-reproducible notebook exists

- AIA-RL 2: MLTRL 2-3, code versioned, training scripted, metrics recorded, but still “research code.”

- AIA-RL 3: MLTRL 4-5, model card complete, unit tests exist, validation performed, asset officially handed over to engineering.

- AIA-RL 4: MLTRL 6-7, model registry exists, semantic versioning, automated non-regression tests, clean API.

- AIA-RL 5: MLTRL 8-9, model published in internal or public catalog, comparative benchmarks published, performance monitoring in production, continuous improvement loop in place.
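The condensation of the ten MLTRL levels into five MRL levels listed above follows a regular pattern (two MLTRL levels per MRL level), so it can be written as a one-line rule. The function name is an assumption for the example.

```python
def mltrl_to_mrl(mltrl: int) -> int:
    """Condense an MLTRL level (0-9) into the five-level MRL scale (1-5)."""
    if not 0 <= mltrl <= 9:
        raise ValueError("MLTRL must be between 0 and 9")
    # Pairs of MLTRL levels share one MRL level: 0-1 -> 1, 2-3 -> 2, ..., 8-9 -> 5.
    return mltrl // 2 + 1


for level in range(10):
    print(f"MLTRL {level} -> MRL {mltrl_to_mrl(level)}")
```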

SecRL: AI security maturity

- AIA-RL 1, no specific AI controls: the model is treated like classic software.

- AIA-RL 2, manual adversarial tests on a few examples, basic PyPI/HuggingFace dependency scan.

- AIA-RL 3, automated adversarial tests in CI/CD, rate limiting, basic guardrails, systematic supply-chain scan.

- AIA-RL 4, formalized red teaming, production monitoring of attacks (prompt injection, jailbreak, data poisoning), vulnerability disclosure policy.

- AIA-RL 5, complete AISPM, third-party certification of adversarial robustness, bug bounty dedicated to models, ISO 42001 annex A.8 compliance.

GovRL: governance & compliance maturity (sources: ISO/IEC 42001 + NIST AI RMF functions Govern/Map/Measure/Manage)

- AIA-RL 1, no one formally responsible, no fact sheet.

- AIA-RL 2, a business owner designated, model fact sheet partially filled.

- AIA-RL 3, model fact sheet + data fact sheet + AI risk register + formal three-way review (business/tech/risk) before each deployment.

- AIA-RL 4, AI-BOM generated automatically, approval workflow integrated into tools (Jira, ServiceNow), automated reporting to the risk committee.

- AIA-RL 5, governance integrated into the enterprise risk committee, annual external audit, automated regulatory reporting (AI Act fundamental rights impact assessment).

OpsRL: operational maturity (sources: MLOps Maturity Model Google/CMU + DataOps/SecOps practices)

- AIA-RL 1, manual deployment on a single machine.

- AIA-RL 2, versioned deployment script, accessible logs.

- AIA-RL 3, complete CI/CD, basic monitoring (latency, error rate), manual rollback.

- AIA-RL 4, canary / shadow deployment, concept drift and data drift monitoring, automatic alerts, feature flags, inference cost tracked.

- AIA-RL 5, shared group MLOps platform, internal contractual SLAs, GPU autoscaling, multi-generation model management, full-stack observability (OpenTelemetry traces).

… Associating with the TRL scale …

- AIA-RL_min = 1: maximum credible TRL = 3 (internal experimentation only)

- AIA-RL_min = 2: maximum credible TRL = 5 (very controlled pilot, never on sensitive data)

- AIA-RL_min = 3: maximum credible TRL = 7 (production possible, but limited scope and reinforced surveillance)

- AIA-RL_min = 4: maximum credible TRL = 8 (large-scale production acceptable)

- AIA-RL_min = 5: TRL 9 possible (fully operational, certifiable, reusable everywhere)
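The compatibility rule above amounts to a lookup table plus a comparison. Here is a minimal sketch; the table values come from the list above, while the function names are assumptions for illustration.

```python
# Maximum credible TRL for each AIA-RL_min value, as listed above.
MAX_CREDIBLE_TRL = {1: 3, 2: 5, 3: 7, 4: 8, 5: 9}


def max_credible_trl(aia_rl_min: int) -> int:
    """Return the highest TRL that can credibly be claimed for a given AIA-RL_min."""
    if aia_rl_min not in MAX_CREDIBLE_TRL:
        raise ValueError("AIA-RL_min must be between 1 and 5")
    return MAX_CREDIBLE_TRL[aia_rl_min]


def trl_claim_is_credible(claimed_trl: int, aia_rl_min: int) -> bool:
    """Check a claimed system TRL against the cap implied by asset maturity."""
    return claimed_trl <= max_credible_trl(aia_rl_min)


print(trl_claim_is_credible(7, 2))  # False: AIA-RL_min 2 caps the system at TRL 5
print(trl_claim_is_credible(7, 3))  # True
```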

AIA-RL and TRL: the higher you go, the closer you get to the bright side of production. “Map or not to map… do it, or do not. There is no try.” (Master AIA, AIA-RL VI: The Return of the TRL-di)

By systematically applying this precise mapping and the AIA-RL_min → TRL max compatibility rule, sterile debates of the type “we are TRL 7 but it explodes in production” no longer have any reason to exist. We immediately see which existing reference (DRL, MLTRL, ISO 42001, NIST AI RMF, MLSMM, etc.) is at issue, what the priority initiative is, and above all what TRL level we can reasonably target in 6, 12, or 24 months.

Constraints, difficulties, and points of vigilance

Many organizations already have their own data maturity model, security grid, architecture reference, or in-house TRL. The AIA-RL grid simply frames what is necessary for AI and adds a constraint on the TRL scale.

Costs and efforts: realistic ranges

Public data and field feedback suggest that, for a large group, implementing AI governance aligned with the AI Act and ISO 42001 often costs on the order of 1–3 M€ in the first year, once internal time (legal, risk, data, IT), external support, and tooling (inventories, monitoring, security) are included.

In steady state, roughly 0.5–1.5 M€ per year is required, depending on scope and degree of automation. For highly exposed players (banking, healthcare, general public), this typically means a core of 3–5 internal FTEs dedicated to AI governance (coordination, legal, risk, data) and a halo of 10–15 FTE equivalents including contributions from business, security, IT, and training/consulting providers.

In addition, MLOps costs are not negligible: developing and operating custom MLOps platforms requires initial investments estimated in the literature between ~1 and 20 M$ before recent additional costs (GPU, AI security), and in practice recent feedback suggests anticipating a 30–50% surcharge (due to the rise of LLMs, GPU shortages/prices, and auditability requirements).

In conclusion, to target GovRL / SecRL / OpsRL at 4–5 on a few critical AI systems, a large group must assume a seven-figure budget. To target 2–3 on a targeted scope, an average organization can stay within clearly lower envelopes by relying on SaaS solutions, organizational practices, and “lean” arrangements.

Economic orders of magnitude and scenarios

To make the framework usable, it is useful to propose cost scenarios.

“Essential” scenario (medium organization, limited resources)

Objective: bring a few key AI systems to:

- DRL / MRL / SecRL / GovRL / OpsRL ~ 2–3,

- over an 18–36 month horizon.

Order of magnitude:

- Annual budget: approximately 150–400 k€, depending on:

  - use of SaaS vs on-prem solutions,

  - use of consulting,

  - extent of training.

- Resources:

  - 0.5–1 FTE for AI/governance coordination,

  - occasional contributions from existing teams.

“Core” scenario (advanced mid-cap or large BU)

Objective: structure AI asset maturity for one domain (e.g., risk, distribution):

- several dozen AI assets (models, datasets, pipelines);

- target: AIA-RL_min 3 on the most critical AI systems.

Order of magnitude:

- Annual budget: 400 k€ – 1.2 M€ (AI governance, basic MLOps, discovery/inventory tools, light AISPM).

- Resources:

  - 2–3 FTEs dedicated to AI steering (governance, data, MLOps),

  - 5–10 equivalent FTEs in team contributions.

“Extended” scenario (multi-BU group / highly exposed regulated sector)

Objective: apply the grid to several hundred AI assets, high-risk AI systems, multi-jurisdictional, targeting AIA-RL 3–4 on critical systems.

Order of magnitude:

- Annual budget: 1–3 M€ (sometimes more), including:

  - extended ISO 42001 AIMS,

  - MLOps / AISPM platforms,

  - AI-BOM / inventory tooling,

  - training and change-management programs.

- Resources:

  - 3–5 internal core AI/governance FTEs,

  - 10–15 equivalent FTEs in business/IT/risk/legal contributions.

Gradual adoption trajectory

Step 1 – Build on existing TRLs

- Identify how TRLs are used today (formal or informal).

- Decide that every AI system must be positioned on:

  - a TRL scale,

  - an AI asset maturity grid (AIA-RL) at least for high-stakes systems.

Step 2 – Experiment with the AIA-RL grid on a pilot scope

- Select 5–10 representative AI systems.

- Evaluate DRL, MRL, SecRL, GovRL, OpsRL (guided qualitative assessment).

- Produce:

  - a TRL × AIA-RL matrix for these cases,

  - a radar per system,

  - associated recommendations.
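The TRL × AIA-RL matrix for a pilot scope can be produced with very little tooling. The sketch below renders it as plain text; the system names and their scores are invented for the example, and the function names are assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Cell = Tuple[int, int]  # (TRL, AIA-RL_min)


def trl_aia_matrix(systems: List[Tuple[str, int, int]]) -> Dict[Cell, List[str]]:
    """Group systems, given as (name, trl, aia_rl_min), by matrix cell."""
    matrix: Dict[Cell, List[str]] = defaultdict(list)
    for name, trl, aia in systems:
        matrix[(trl, aia)].append(name)
    return dict(matrix)


def render_matrix(matrix: Dict[Cell, List[str]]) -> str:
    """Render TRL 9..1 as rows and AIA-RL_min 1..5 as columns; '.' marks an empty cell."""
    lines = ["TRL \\ AIA-RL_min | " + " | ".join(str(a) for a in range(1, 6))]
    for trl in range(9, 0, -1):
        cells = [",".join(matrix.get((trl, a), [])) or "." for a in range(1, 6)]
        lines.append(f"TRL {trl} | " + " | ".join(cells))
    return "\n".join(lines)


# Hypothetical pilot systems with made-up scores.
pilot = [("scoring", 7, 2), ("chatbot", 5, 2), ("forecast", 6, 3)]
print(render_matrix(trl_aia_matrix(pilot)))
```

Systems that sit high on the TRL axis but low on AIA-RL_min (here, the hypothetical "scoring" system) are the ones where claimed readiness and asset maturity diverge most.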

Step 3 – Anchor the grid in various processes

For example:

- Project processes: required AIA-RL_min level to pass certain gates.

- Risk/compliance processes: use of GovRL / SecRL to decide the required level of review.

- Architecture/IT processes: OpsRL as acceptance criterion for deployment on certain platforms.
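As an illustration of how such gates could be encoded, here is a minimal sketch. The gate names and every threshold in `GATE_REQUIREMENTS` are hypothetical; each organization would set its own.

```python
from typing import Dict, List

# Hypothetical gates and thresholds, for illustration only.
GATE_REQUIREMENTS: Dict[str, Dict[str, int]] = {
    "pilot": {"AIA-RL_min": 2},
    "production": {"AIA-RL_min": 3, "SecRL": 3, "OpsRL": 3},
}


def gate_blockers(gate: str, scores: Dict[str, int]) -> List[str]:
    """Return the unmet requirements for a gate; an empty list means the gate is passed."""
    blockers = []
    for name, required in GATE_REQUIREMENTS[gate].items():
        # "AIA-RL_min" is the lowest score across all dimensions provided.
        actual = min(scores.values()) if name == "AIA-RL_min" else scores[name]
        if actual < required:
            blockers.append(f"{name}: {actual} < {required}")
    return blockers


scores = {"DRL": 3, "MRL": 3, "SecRL": 2, "GovRL": 3, "OpsRL": 3}
print(gate_blockers("pilot", scores))       # []
print(gate_blockers("production", scores))  # ['AIA-RL_min: 2 < 3', 'SecRL: 2 < 3']
```

Returning the list of blockers rather than a plain yes/no keeps the gate usable as a steering lever: the team sees which dimension to raise first.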

Step 4 – Extend, automate, refine

- Gradually expand the scope (additional AI systems).

- Automate what can be automated (asset discovery, information collection, dashboards).

- Refine grids, weightings, thresholds based on field feedback.

Conclusion

This review highlights a landscape already rich in frameworks (TRL, DRL, MLTRL, MLSMM, ISO 42001, NIST AI RMF, AISPM, MLOps…). Integrating AI assets can be achieved by reorganizing these building blocks into a common reading grid, by creating a shared language between business, tech, risk, legal, and management, and by anchoring AI decisions in a clear vision of the real maturity of the assets that support them.

The AI asset maturity grid, as presented here, must be seen as an internal and modular instrument, an interface between existing references, and a steering lever rather than a control tool.

It does not promise that all systems will one day reach level 4–5, nor that organizations must invest disproportionate amounts for every use case. It proposes to focus efforts where the value/risk combination justifies it, to make explicit what is today often managed “by feel” by a few experts, and to offer decision-makers a structure for costing, prioritizing, and sequencing AI investments.

Lego V - AI Asset maturity

The opinions expressed in this article are strictly personal and do not necessarily reflect those of my employer. The content is provided for information purposes only and does not constitute legal advice. This article explores emerging architectural concepts and analyzes market trends.