From Innovation to Industrial Asset - Transforming AI Initiatives into Sustainable Value Creators

Translated from French with the help of AI – please excuse any linguistic nuances.

While enthusiasm for autonomous agents and state-of-the-art models remains strong, the operational reality is more nuanced. According to the MIT Media Lab (Project NANDA) report The GenAI Divide: State of AI in Business 2025, 95% of GenAI initiatives have no measurable impact on the P&L (Fortune - MIT Report: 95% of Generative AI Pilots Failing CFO Scrutiny). Gartner notes that nearly 50% of GenAI projects are abandoned after the PoC phase (GenAI project failure), while the RAND Corporation attributes more than 80% of failures to data quality issues and inadequate infrastructure (The Root Causes of Failure for Artificial Intelligence).

Today, a new factor is amplifying the risk: regulations around traceability. The Fair Use argument is collapsing in the face of new rules that demand surgical transparency.

It is time to move from a logic of innovation-as-spectacle to the construction of industrial AI assets. This requires a holistic approach that tightly integrates technical, business, and legal/regulatory dimensions.

Why do most initiatives remain stuck?

- Teams often excel at rapidly developing high-performing demonstrators (RAG, agents, optimization). These PoCs, typically positioned between TRL 3 and 5, play an essential role in exploration and quick idea validation. However, they are frequently built on fragile foundations: insufficiently qualified data, unversioned models, manual pipelines, and a lack of production observability. Without a clear strategy to mature toward TRL 6 to 9, technical debt often becomes insurmountable during scale-up.

- Many PoCs are launched without clear alignment to strategic priorities or realistic cost estimates (inference, maintenance, retraining). ROI remains theoretical, and the reusability of assets across teams or business units is rarely anticipated. The result: cost centers rather than margin-generating assets.

- With the progressive enforcement of the AI Act, traceability, risk management, transparency, and GDPR compliance are becoming non-negotiable requirements. Yet too many projects address these aspects only at the end of the cycle, which leads to costly blockers or rework. Today, this risk is becoming systemic: Article 50 of the AI Act becomes enforceable on 2 August 2026. Model providers (GPAI) will be required to publish a detailed summary of their training data (EU AI Act 2026: New Rules for Training Data - Scalevise).

An AI asset can only create sustainable value if it is technically robust, economically viable, and legally responsible.

The radical shift in algorithmic traceability

The “Digital Omnibus” acts as a simplification “patch” that harmonizes the EU AI Act, GDPR, and cybersecurity rules.

1. The Legal Framework: The “Digital Omnibus” Effect

The key deadline arrives on 2 August 2026: Article 50 of the AI Act becomes enforceable. Model providers (GPAI) will have to publish a detailed summary of their training data (EU AI Act 2026: New Rules for Training Data - Scalevise). In the USA, the Federal CLEAR Act (March 2026) follows Europe’s lead by requiring the deposit of an inventory of copyrighted works used in datasets with the Copyright Office (Text - S.3813 - CLEAR Act).

2. The Technical Impact: From Web Scraping to “Data Lineage”

The C2PA 2.3 standard has become the industry benchmark for content provenance and digital watermarking. It is no longer optional but a necessity to prove whether content is AI-generated or not (C2PA Content Credentials 2.3 - C2PA News 2026). Protocols such as “AI-robots.txt” are now standardized. Technically, training pipelines must incorporate automatic filters that exclude sources that have exercised their right of reservation (opt-out). The biggest technical risk in 2026 is the Takedown Injunction: if a dataset is deemed illicit, the model must be retrained (at colossal cost), which explains the emergence of Machine Unlearning techniques (Softwareseni - EU AI Act and Content Provenance).
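As an illustration of such an opt-out filter, here is a minimal sketch in Python. It assumes the reservation is expressed through ordinary robots.txt rules addressed to a crawler named `ExampleAIBot` (a hypothetical name), and that the robots.txt text for each host has already been fetched. It relies on the standard library's `urllib.robotparser` as a stand-in and does not implement any specific “AI-robots.txt” protocol.

```python
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

# Hypothetical user agent of the training-data crawler (an assumption).
AI_USER_AGENT = "ExampleAIBot"


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that was fetched earlier (no network access here)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def filter_allowed(urls, robots_by_host, user_agent=AI_USER_AGENT):
    """Keep only URLs whose host has not opted out for this user agent.

    Hosts with no known robots.txt are excluded: a conservative policy
    chosen for this sketch, not a legal requirement.
    """
    allowed = []
    for url in urls:
        robots_txt = robots_by_host.get(urlsplit(url).netloc)
        if robots_txt is None:
            continue
        if build_parser(robots_txt).can_fetch(user_agent, url):
            allowed.append(url)
    return allowed


# Illustrative data: one host reserves its rights against the AI crawler,
# the other allows everyone.
ROBOTS_BY_HOST = {
    "news.example": "User-agent: ExampleAIBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n",
    "blog.example": "User-agent: *\nAllow: /\n",
}
CANDIDATE_URLS = [
    "https://news.example/article",
    "https://blog.example/post",
    "https://unknown.example/page",
]
TRAINABLE_URLS = filter_allowed(CANDIDATE_URLS, ROBOTS_BY_HOST)
```

Excluding hosts with no known policy is the cautious default here; a real pipeline would also log each exclusion so the decision is auditable later.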

3. The Business Impact: The Premium on “Ethical AI”

Legislation is creating a new barrier to entry that favors giants already holding catalogs. In 2026, 76% of companies prefer to buy off-the-shelf AI solutions (SaaS) rather than train their own models, in order to outsource the model’s technical compliance (training data, transparency) to the provider (AI Training for Business 2026 - TechClass). This “Clean AI” SaaS approach represents a realistic and accessible path for SMEs and mid-market companies: it lets them succeed without the colossal resources required to build a proprietary Data Moat, while dramatically accelerating time-to-value.

Using a SaaS provider does not, however, erase the company’s responsibility as “Deployer”. It remains fully accountable under the law for the concrete use of AI in its business processes, for implementing appropriate human oversight, for non-discrimination of results, and for overall use-case compliance.

We are seeing the emergence of a market for “premium” and “copyright-free certified” data. Adobe and Apple dominate thanks to their massive licensing agreements, while “Open” unsourced models suffer a loss of trust from legal departments. The competitive gap no longer lies in model architecture but in the ownership of an exclusive and legally clean Data Moat (Apple Intelligence vs Adobe - TradingView 2026).

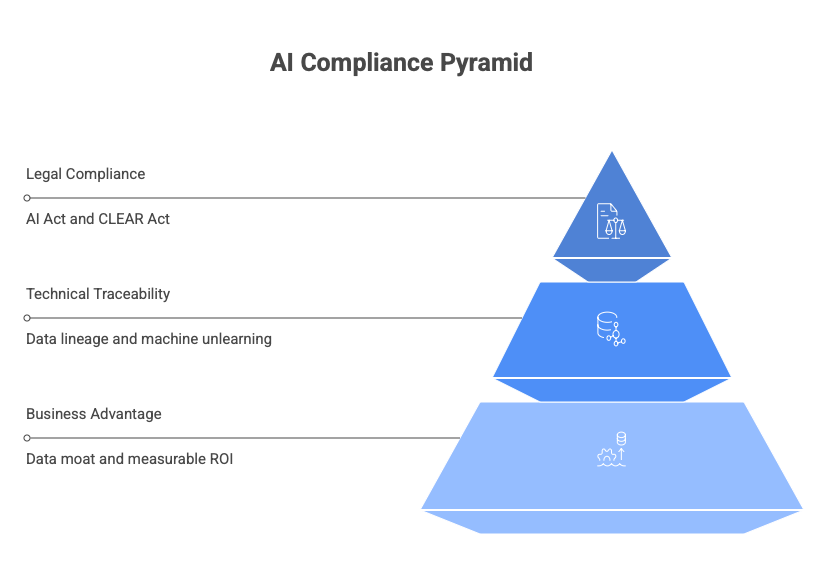

Summary: The compliance pyramid

At the top: Legal Compliance (AI Act + CLEAR Act + C2PA).

In the middle: Technical Traceability (Data Lineage + Machine Unlearning + watermarking).

At the base: Business Advantage (Buy vs Build + Data Moat + measurable ROI).

“Clean AI” has become the new “organic” label: more expensive, slower to produce, but the only way to enter the institutional market.

The concrete challenges facing on-the-ground leaders

Recent executive testimonials and analyses highlight the real obstacles organizations encounter when moving PoCs to industrial AI assets, intersecting the technical, business, and legal/regulatory dimensions.

Rob Purinton, Chief AI Officer at AdventHealth (one of the leading U.S. health systems), illustrates the tension between acceleration and governance: “AI universally compresses time, so leadership’s job is to hunt for bottlenecks and constraints, then deliberately decide where to slow down and where speed creates advantage. Governance should steer AI investment, not stall it.” The Chief AI Officer role directly integrates the technical (pipeline bottlenecks), business (investment decisions and ROI), and legal (governance to avoid regulatory risks) dimensions (Rob Purinton).

Shez Partovi, Chief Innovation Officer at Philips, focuses on the impact of the EU AI Act on innovation. He explains that large companies like Philips can absorb certification costs, but startups risk being shut out: “Companies like Philips may actually be positioned to overcome that headwind because of our size and scale, but young companies, startups, forget it.” He adds that the regulation could lead to faster innovation in North America than in Europe: “It would be a shame to bring innovation faster in the North America market than in the European market for a European company.” His role intersects technical innovation, business strategy, and legal constraints, underscoring the risk of an “innovation flight” out of Europe (CIO).

Integrating AI with legacy systems is a major obstacle. Older infrastructures (siloed data, historical ERPs, and mainframes) were not designed for modern AI workloads (scalability, real-time data lineage, C2PA provenance). According to a dedicated analysis of CTO challenges, these systems create data silos, security issues, and ROI constraints that seriously complicate the implementation of data lineage, C2PA watermarking, and large-scale machine unlearning, with migration and retraining costs often underestimated (https://www.gartner.com/en/articles/cio-challenges).

In mid-market companies and SMEs, the systemic risks posed by Article 50 of the AI Act (enforceable 2 August 2026, requiring a detailed summary of training data for GPAI) and provenance requirements are considered critical. Faced with the high compliance costs for “providers,” the vast majority opt for plug-and-play “Clean AI” SaaS solutions. This allows them to remain in the “deployer” position, leave model-level compliance (training data, transparency) to the provider while keeping their own deployer obligations, limit hidden costs (including machine unlearning in the event of an injunction), and achieve rapid time-to-value.

These concrete insights from sector leaders show that successful AI industrialization requires proportionate governance that integrates the three pillars—technical (data lineage, MLOps), business (realistic ROI, Buy vs Build), and legal (C2PA traceability, Article 50 compliance)—from the earliest phases, without stifling exploratory experimentation.

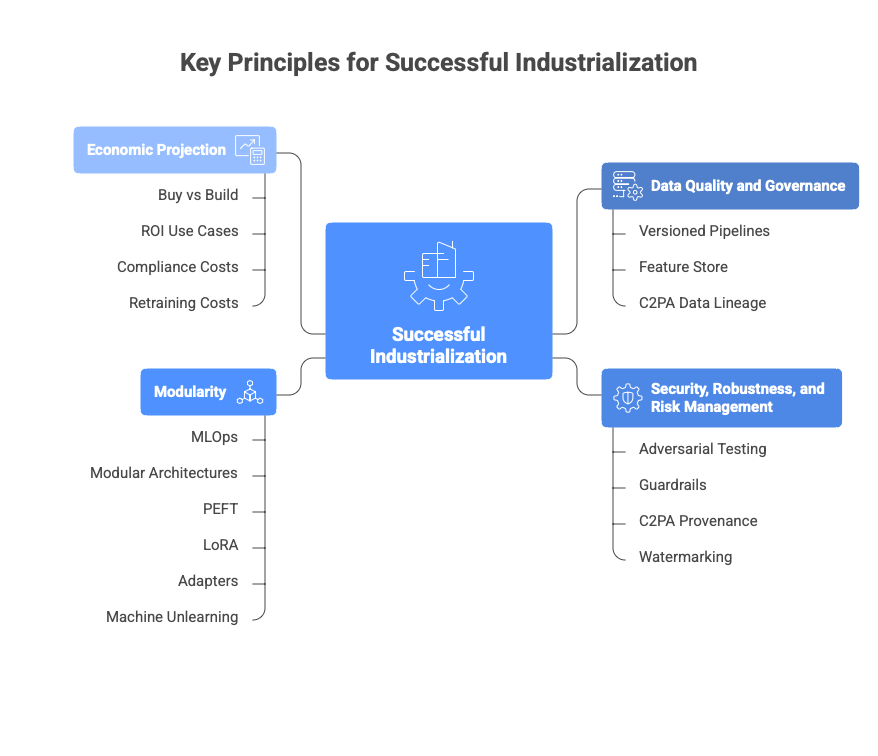

The four key principles for successful industrialization

1. Place data quality and governance at the heart of the project from the outset

Versioned pipelines + feature store + mandatory C2PA Data Lineage. Data must not only be high-quality but legally clean (Softwareseni - EU AI Act and Content Provenance).
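To make “legally clean” checkable in a pipeline, each training item can carry a small lineage record. The sketch below is a minimal illustration under stated assumptions: the field names (`source_uri`, `license`, `dataset_version`) and the license labels are hypothetical, and the record is a plain internal structure, not a C2PA manifest.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LineageRecord:
    source_uri: str       # where the item came from
    license: str          # license or agreement label (hypothetical values)
    sha256: str           # content hash: ties the record to the exact bytes
    dataset_version: str  # version of the dataset that includes the item


def make_record(content: bytes, source_uri: str, license: str,
                dataset_version: str) -> LineageRecord:
    """Build a lineage record from the raw bytes of one training item."""
    digest = hashlib.sha256(content).hexdigest()
    return LineageRecord(source_uri, license, digest, dataset_version)


def manifest_digest(records) -> str:
    """Fingerprint of a dataset version, independent of record order."""
    ordered = sorted(records, key=lambda r: (r.sha256, r.source_uri))
    payload = json.dumps([asdict(r) for r in ordered], sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def items_from_source(records, source_prefix: str):
    """List the records to review if a given source is challenged."""
    return [r for r in records if r.source_uri.startswith(source_prefix)]


records = [
    make_record(b"contract clause A", "https://licensed.example/a", "commercial-license", "v1"),
    make_record(b"press article B", "https://news.example/b", "unknown", "v1"),
]
fingerprint_v1 = manifest_digest(records)
```

Storing the manifest fingerprint next to each trained model version lets a team answer, for any model, which exact data went in and under which license.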

2. Design security, robustness, and risk management “by design”

Adversarial testing + guardrails + C2PA provenance and watermarking (C2PA Content Credentials 2.3). This protects reputation and supports compliance with ISO 42001 and the NIST AI RMF.

3. Industrialize through modularity from the first iterations

MLOps must now guarantee re-training agility. Rather than monolithic models, the new standard is the use of modular architectures (PEFT, LoRA, Adapters). These techniques allow rapid fine-tuning of a model on your own business data while retaining the “Clean” base of the original model. Above all, they provide essential flexibility in the face of regulatory requirements: in the event of a takedown injunction, surgical Machine Unlearning becomes possible, allowing specific capabilities to be unlearned or neutralized without fully retraining the model. This dramatically reduces costs, lead times, and operational risks.
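The modular idea can be sketched with a toy example: a frozen base weight matrix plus low-rank adapters that are kept separate rather than merged, each tagged with the data sources it was tuned on. Everything here is an assumption made for illustration (pure-Python matrices, the `ModularModel` class, the `takedown` method, the source labels); it is not the API of any PEFT library. Dropping an adapter removes only what that adapter contributed and says nothing about the base model's own training data.

```python
from dataclasses import dataclass, field


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    """Element-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


@dataclass
class Adapter:
    name: str
    up: list         # d_out x r matrix (LoRA "B")
    down: list       # r x d_in matrix (LoRA "A")
    sources: frozenset  # data sources used to tune this adapter

    def delta(self):
        """Low-rank weight update contributed by this adapter."""
        return matmul(self.up, self.down)


@dataclass
class ModularModel:
    base: list  # frozen base weights, never modified
    adapters: dict = field(default_factory=dict)

    def attach(self, adapter: Adapter) -> None:
        self.adapters[adapter.name] = adapter

    def effective_weight(self):
        """Base weights plus the updates of all attached adapters."""
        weight = [row[:] for row in self.base]
        for adapter in self.adapters.values():
            weight = add(weight, adapter.delta())
        return weight

    def takedown(self, source: str):
        """Detach every adapter tuned on the challenged source."""
        removed = [n for n, a in self.adapters.items() if source in a.sources]
        for name in removed:
            del self.adapters[name]
        return removed


model = ModularModel(base=[[1.0, 0.0], [0.0, 1.0]])
model.attach(Adapter("legal_faq", up=[[1.0], [2.0]], down=[[0.5, 0.5]],
                     sources=frozenset({"vendor_x"})))
weight_with_adapter = model.effective_weight()
removed_adapters = model.takedown("vendor_x")
weight_after_takedown = model.effective_weight()
```

Because the adapter was never merged into the base weights, detaching it restores the base exactly, which is what makes this kind of removal cheap compared with full retraining.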

4. Integrate a realistic and continuous economic projection

Before any scaling, compare Buy vs Build (76% of companies already prefer to buy) (TechClass 2026). Prioritize high-ROI use cases while factoring in hidden compliance and potential retraining costs.
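A Buy vs Build comparison of this kind can be reduced to a simple expected-cost calculation. The sketch below is illustrative only: all figures are hypothetical placeholders, and the model (linear yearly costs, a fixed yearly probability of a forced retraining) is a deliberate simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    upfront: float              # initial build or integration cost
    annual_run: float           # inference + maintenance per year
    annual_compliance: float    # audits, documentation, legal review per year
    retrain_cost: float         # cost of one forced retraining
    retrain_probability: float  # assumed yearly probability of that event

    def expected_tco(self, years: int) -> float:
        """Expected total cost of ownership over the given horizon."""
        yearly = (self.annual_run + self.annual_compliance
                  + self.retrain_probability * self.retrain_cost)
        return self.upfront + years * yearly


def net_value(option: Option, annual_benefit: float, years: int) -> float:
    """Expected benefit minus expected cost over the horizon."""
    return years * annual_benefit - option.expected_tco(years)


# Hypothetical figures, for illustration only.
build = Option("build", upfront=500_000, annual_run=120_000,
               annual_compliance=60_000, retrain_cost=400_000,
               retrain_probability=0.25)
buy = Option("buy", upfront=50_000, annual_run=200_000,
             annual_compliance=20_000, retrain_cost=0,
             retrain_probability=0.0)

HORIZON_YEARS = 3
ANNUAL_BENEFIT = 450_000
ranking = sorted([build, buy],
                 key=lambda o: net_value(o, ANNUAL_BENEFIT, HORIZON_YEARS),
                 reverse=True)
```

With these placeholder numbers the retraining risk alone adds 100,000 per year to the build option, which is the kind of hidden cost the comparison is meant to surface.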

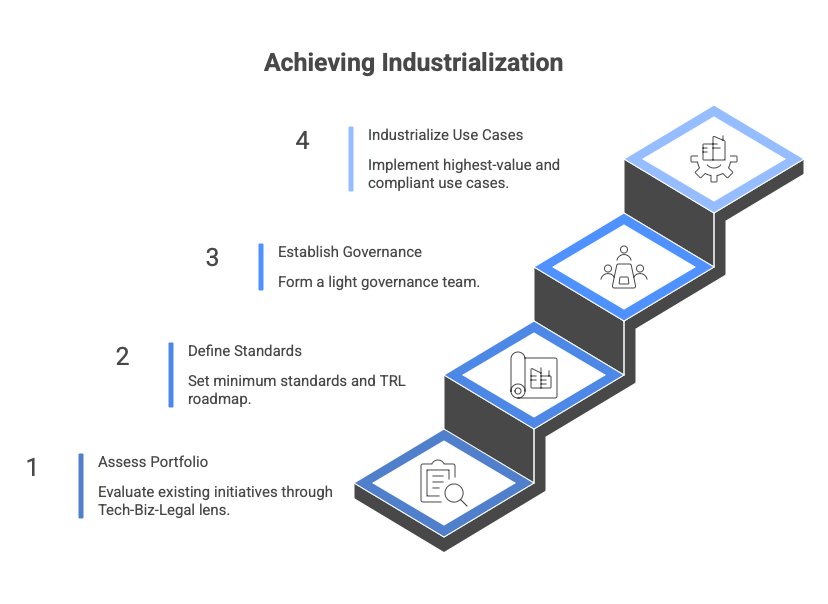

A trajectory tailored to each organization

- Quickly assess the existing portfolio of initiatives through a Tech-Biz-Legal lens and map their current TRL level.

- Define minimum standards and a clear TRL roadmap toward industrialization.

- Establish light governance involving Business, AI, IT, and Risk/Legal teams.

- Progress iteratively: industrialize first the highest-value and compliant use cases.
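The steps above can be sketched as a simple portfolio triage. The scoring scale, the `min_trl` threshold, and the example initiatives are hypothetical; a real assessment would rely on richer criteria for each of the three lenses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    trl: int             # current Technology Readiness Level, 1 to 9
    business_value: int  # 1 (low) to 5 (high), hypothetical scale
    compliant: bool      # outcome of the legal/regulatory review


def industrialization_queue(portfolio, min_trl=5):
    """Compliant initiatives at or above min_trl, highest value first."""
    eligible = [i for i in portfolio if i.compliant and i.trl >= min_trl]
    return sorted(eligible, key=lambda i: (-i.business_value, -i.trl))


def needs_remediation(portfolio):
    """Initiatives blocked on compliance, whatever their maturity."""
    return [i for i in portfolio if not i.compliant]


portfolio = [
    Initiative("contract-search RAG", trl=5, business_value=4, compliant=True),
    Initiative("pricing optimizer", trl=6, business_value=5, compliant=True),
    Initiative("marketing image generator", trl=7, business_value=3, compliant=False),
    Initiative("support agent prototype", trl=3, business_value=4, compliant=True),
]
queue = industrialization_queue(portfolio)
blocked = needs_remediation(portfolio)
```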

For large groups: shared platforms + enterprise standards.

For SMEs: hyperscaler managed services + “Clean AI” SaaS solutions for rapid, lower-risk time-to-value.

Conclusion

The industrialization of AI assets must no longer be a final step but a requirement set from the design phase. Today, tightly integrating the technical, business, and legal dimensions while respecting TRL maturity logic is no longer optional: it is the condition for survival.

It is essential to avoid ending up with a “toxic” model that you can no longer deploy in Europe. “Clean AI” has become the new “organic” label: it is more expensive and slower to produce, but it is the only way to enter the institutional market.

Lego VI - From innovation to Industrial asset

The opinions expressed in this article are strictly personal and do not necessarily reflect those of my employer. The content is provided for information purposes only and does not constitute legal advice. This article explores emerging architectural concepts and analyzes market trends (Gartner, Forrester, Scalevise, C2PA, European Parliament, MIT NANDA). The technological solutions cited are given as examples and do not prejudge the technological choices or partnerships of my employer.